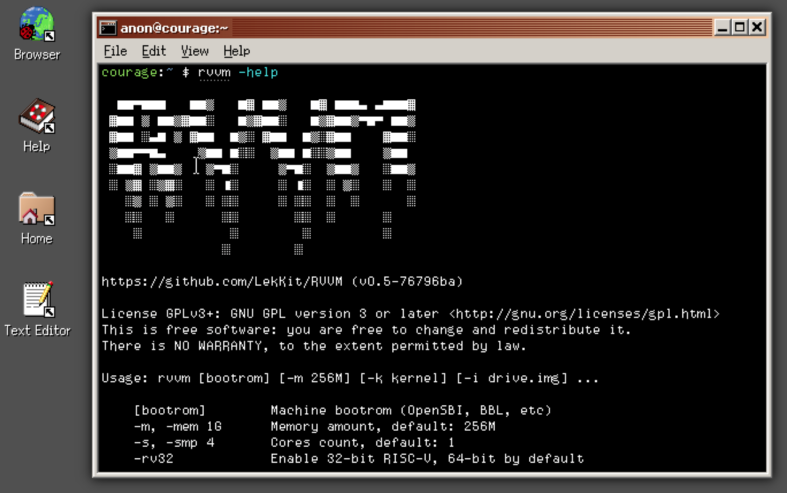

RVVM - The RISC-V Virtual Machine 0.6

A RISC-V virtual machine is a software-based emulation of a computer system that uses the RISC-V instruction set architecture (ISA). It allows software developers to test and debug their RISC-V code without the need for physical hardware. A RISC-V virtual machine typically consists of an emulator that interprets RISC-V instructions and simulates the hardware behavior, as well as a virtual memory system and various other components of a computer system.

cd Ports/rvvm

./package.sh

This description was automatically generated by ChatGPT. Feel free to add an accurate human-made description!


A RISC-V virtual machine (VM) is a software environment that emulates the behavior of a RISC-V processor, usually on a host with a different processor architecture. RISC-V is an open instruction set architecture designed to be simple and efficient, following the reduced instruction set computing (RISC) approach.

A RISC-V VM can be useful in a variety of scenarios, such as developing and testing software, or running RISC-V software on hosts that use a different architecture. It can also be used in cloud computing and data center environments, where multiple VMs can run on a single physical machine.

There are several implementations of RISC-V virtual machines available, both open source and proprietary. Popular examples include QEMU and Spike, alongside RVVM itself. These VMs provide a layer of abstraction between the software running on the virtual machine and the underlying hardware, allowing RISC-V software to run without modification.

RISC-V VMs typically include a variety of features, such as virtual memory management, instruction set emulation, and hardware virtualization support. They can also provide features such as networking and file system support, allowing the virtual machine to interact with the host operating system and other virtual machines running on the same physical machine.
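As a rough illustration of what instruction set emulation means, the following is a minimal sketch of a fetch-decode-execute loop for two RV32I instructions (`ADDI` and `ADD`). It is not RVVM's implementation; the names `step`, `run`, and `PROGRAM` are hypothetical and chosen only for this example, and memory is assumed to be a flat byte string holding nothing but the program.

```python
MASK32 = 0xFFFFFFFF  # registers are 32 bits wide in RV32I


def sign_extend(value, bits):
    """Interpret the low `bits` bits of value as a two's-complement integer."""
    sign_bit = 1 << (bits - 1)
    return (value & (sign_bit - 1)) - (value & sign_bit)


def step(regs, pc, memory):
    """Fetch, decode, and execute one instruction; return the next pc."""
    insn = int.from_bytes(memory[pc:pc + 4], "little")

    # Field positions follow the RV32I base instruction formats.
    opcode = insn & 0x7F
    rd = (insn >> 7) & 0x1F
    funct3 = (insn >> 12) & 0x7
    rs1 = (insn >> 15) & 0x1F
    rs2 = (insn >> 20) & 0x1F
    funct7 = insn >> 25

    if opcode == 0x13 and funct3 == 0:
        # ADDI rd, rs1, imm (I-type, 12-bit signed immediate)
        result = regs[rs1] + sign_extend(insn >> 20, 12)
    elif opcode == 0x33 and funct3 == 0 and funct7 == 0:
        # ADD rd, rs1, rs2 (R-type)
        result = regs[rs1] + regs[rs2]
    else:
        raise NotImplementedError(f"unsupported instruction {insn:#010x}")

    if rd != 0:  # x0 is hard-wired to zero, so writes to it are discarded
        regs[rd] = result & MASK32
    return pc + 4


def run(program):
    """Execute the program from address 0 until pc runs past its end."""
    regs = [0] * 32
    pc = 0
    while pc < len(program):
        pc = step(regs, pc, program)
    return regs


# addi x1, x0, 5 ; addi x2, x0, -3 ; add x3, x1, x2
PROGRAM = b"".join(
    word.to_bytes(4, "little")
    for word in (0x00500093, 0xFFD00113, 0x002081B3)
)

regs = run(PROGRAM)
print(regs[1], hex(regs[2]), regs[3])
```

A real emulator additionally handles the rest of the instruction set, memory-mapped devices, privilege levels, and traps, which this sketch leaves out.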

One important consideration when using a RISC-V VM is performance. Because the virtual machine usually emulates a processor architecture different from the host's, software runs slower than it would natively. Techniques such as just-in-time (JIT) compilation of guest code can reduce this overhead considerably.

Website: https://github.com/LekKit/RVVM

Port: https://github.com/SerenityOS/serenity/tree/master/Ports/rvvm